Company Profile

Geordie AI is a cybersecurity startup founded in 2025 and headquartered in London, specializing in AI Agent security and governance. As enterprises scale up their use of AI agents that execute tasks autonomously, often with high privileges across multiple platforms, these systems pose new security challenges. Geordie has developed an “Agent-native” security platform that gives enterprises real-time discovery, behavior monitoring, and risk control for the AI agents deployed within their organizations. This helps security teams understand which AI agents are running, which systems they are accessing, and whether any abnormal behavior is occurring.

Geordie’s platform gives businesses real-time visualization, risk intelligence analysis, and policy control, providing foundational infrastructure for deploying and scaling AI agents securely. In 2026, the company was named one of the ten finalists of the RSAC Innovation Sandbox contest, marking it as one of the representative startups in the emerging AI Agent security space.

Geordie AI was founded by a team of technical experts from well-known security companies; its core founding members include CEO Henry Comfort and CTO Benji Weber. Henry Comfort previously served as COO for the Americas at the AI security company Darktrace, bringing extensive experience in commercializing and deploying AI-driven security products globally. Benji Weber held an executive engineering role at the developer security platform Snyk, with long experience in developer tools and security platform architecture. The team also includes Hanah-Marie Darley, an AI and security strategy expert from Darktrace. Together, the founders bring deep expertise in AI security, enterprise platform engineering, and cybersecurity productization, which forms a critical foundation for Geordie AI’s entry into the AI Agent security market [1].

In terms of financing, Geordie AI completed a $6.5 million seed round in 2025, co-led by Ten Eleven Ventures, a venture capital firm focused on cybersecurity, and General Catalyst, a global venture capital firm [2], with participation from several angel investors. The funds are allocated mainly to platform research and development, product commercialization, and market expansion. The investors argue that as enterprises introduce autonomous AI agents, security teams need new technical frameworks to monitor and govern these systems, and they expect Geordie’s Agent-native security platform to become a critical component of enterprise security architectures.

Industry Background and Core Pain Points the Product Solves

With the rapid development of generative AI and large language models, a growing number of enterprises are introducing AI Agents into scenarios such as research and development (R&D), operations, office automation, and data analysis. These agents can autonomously plan tasks, invoke tools, access enterprise data, and execute automated workflows; examples include code generation agents, automated IT operations agents, and enterprise knowledge assistants. Compared with traditional automation scripts or applications, AI Agents have stronger autonomous decision-making and cross-system collaboration capabilities, and therefore significant potential to improve enterprise efficiency. However, as their numbers within enterprises grow rapidly, AI Agents are becoming a new type of operational entity within enterprise IT systems. Their behavior patterns and risk profiles differ significantly from those of traditional systems, presenting new challenges for enterprise security and governance.

First, enterprises generally lack unified visibility into, and management of, their AI Agents. AI Agents may be distributed across many environments: intelligent assistants within SaaS platforms, agents that developers build in code, and agents running on employee endpoints. Because these agents span different frameworks and platforms, security teams struggle to see how many agents are deployed in the organization, which systems they run on, and who created them and who uses them. Without this visibility, enterprises cannot build a unified asset inventory for agents or assess their risk effectively.

Second, enterprises struggle to keep track of the capability boundaries and permission scopes of AI Agents. In many scenarios, AI Agents act like “digital employees,” invoking various business tools and accessing internal enterprise data. In practice, however, most enterprises conduct only a simple risk assessment when an agent is first integrated into a system and have no mechanism for continuous capability auditing afterward. Organizations often cannot say exactly which tools their agents can invoke, what data they can access, or which business tasks they are currently performing. As a result, some agents accumulate excessive permissions without anyone noticing, creating potential security vulnerabilities.

Third, the non-deterministic behavior of AI Agents makes traditional security monitoring methods hard to apply. Agents make decisions autonomously based on contextual information, and their behavioral paths can shift as the environment changes. Enterprises therefore need to monitor continuously what AI Agents actually do within real business processes: what tasks they perform each day, whether they follow expected workflows, and whether any anomalous behavior occurs. Yet most organizations today lack observability capabilities built specifically for AI Agent behavior, which prevents continuous auditing and tracking of agent operations.

Furthermore, as AI Agents connect to new systems, tools, and data resources, their risk surface keeps expanding. During task execution, an agent might access sensitive data, invoke unauthorized tools, or make erroneous decisions based on a manipulated context, leading to security issues such as data leakage or privilege escalation. And because AI Agents may collaborate with one another, anomalous behavior in a single agent could trigger cascading failures or interrupt business processes. These risks are difficult to identify and assess promptly within traditional security frameworks. Consider the recently popular OpenClaw: users who deploy it locally often grant it permission to read files, execute commands, and access their accounts, and security researchers have found that such AI Agents, while automating tasks, can bulk-create fake accounts and spread fraudulent content [4][5].

Finally, traditional security products struggle to address the novel risks that AI Agents introduce. Existing security tooling such as IAM, SIEM, and EDR was designed primarily for human users or traditional applications. AI Agents, by contrast, make decisions and act autonomously in real time, and in many cases there is not even a direct “kill switch” through which they can be stopped. Traditional detection and response methods are therefore often too slow to contain risks that arise while an agent is running. Enterprises consequently need a security and governance solution designed specifically for AI Agents: one that continuously monitors agent behavior at runtime, assesses risk, and provides policy-based control.

Against this backdrop, Geordie AI has introduced a security governance platform tailored to AI Agents. By automatically discovering the agents running within an enterprise, continuously analyzing their capabilities and behaviors, and establishing a unified framework for risk assessment and policy control, the platform helps organizations address the core challenges of agent visibility, behavioral monitoring, permission management, and risk governance. This lets enterprises adopt agent technology while maintaining security and compliance.

Product Analysis

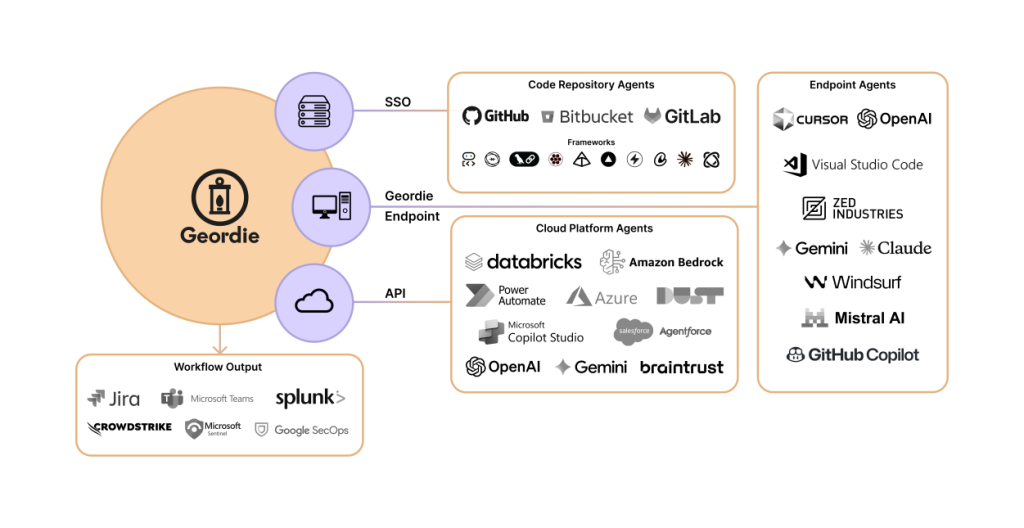

Geordie AI provides a security governance platform covering the entire lifecycle of enterprise AI Agents. Through unified integration with code environments, cloud platforms, and endpoint devices, it delivers end-to-end visibility and risk governance for an organization’s agent ecosystem. The platform connects to existing enterprise systems via SSO, APIs, and endpoint agents to identify and monitor AI Agents operating across diverse environments—such as development agents in code repositories, automation agents on cloud platforms, and AI assistants on employee endpoints. By employing a unified data collection and analysis mechanism, Geordie constructs a comprehensive view of the enterprise’s agent operating ecosystem and correlates this security information with existing security and operations tools (e.g., log platforms, ticketing systems, and collaboration tools) [3]. In terms of core capabilities, Geordie AI primarily offers the following:

End-to-end Agent Visibility

A core capability is End-to-End Agent Visibility. The platform establishes a unified view across different architectures and platforms to identify and manage all AI Agents within an enterprise as assets. It displays their permission status, accessible data resources, invokable tools, and traces of executed operations. Simultaneously, the system continuously collects behavioral data on agents, forming a complete record of agent decisions and activities, which serves as the foundation for security audits and risk analysis.

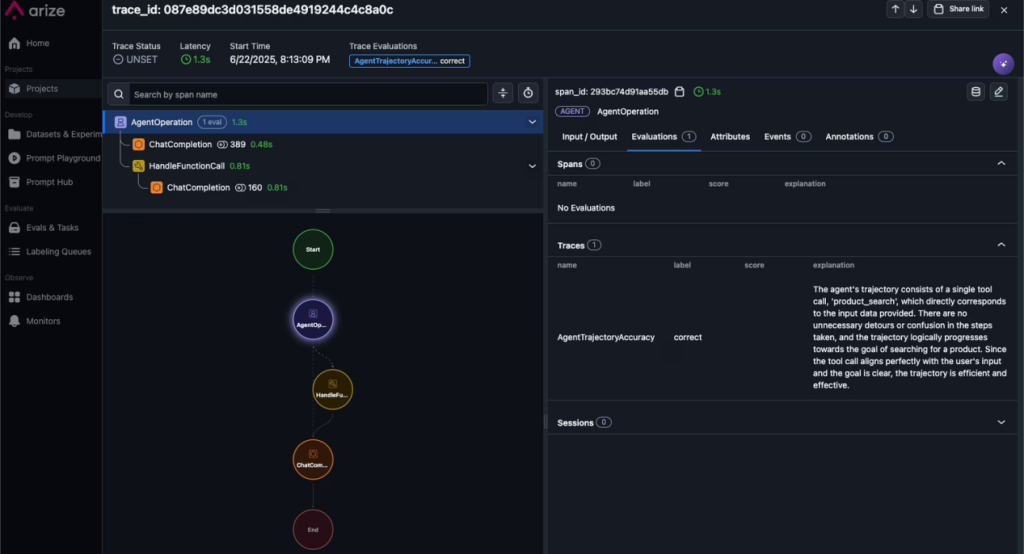

From an implementation perspective, this capability likely involves deploying lightweight collection components within the AI Agent runtime environment to record key behavioral events in real time, such as model inference calls, tool invocations, API requests, and data access activities. These events would then flow through a unified log or event pipeline to a central control platform, which applies asset identification and correlation analysis to model agent instances from different systems uniformly and build an enterprise-level agent asset graph, enabling unified cross-platform visualization and management. This approach resembles that of existing AI application observability platforms, such as LangChain’s LangSmith [6] and Arize AI [7], which trace LLM call chains and monitor agent behavior.
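To make the event-collection and asset-modeling idea concrete, here is a minimal Python sketch. It assumes each collector emits a normalized event record; `AgentEvent`, `AgentAsset`, `AgentInventory`, and the event-kind strings are hypothetical names chosen for illustration, not Geordie’s data model or API. The inventory simply groups the resources each agent has touched by event kind, which is the simplest form of the agent asset graph described above.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import DefaultDict, Dict, Set


@dataclass(frozen=True)
class AgentEvent:
    """One behavioral event reported by a (hypothetical) runtime collector."""
    agent_id: str    # identifier the collector assigns to the agent instance
    platform: str    # where it runs, e.g. "code-repo", "cloud", "endpoint"
    kind: str        # "model_call", "tool_call", "api_request" or "data_access"
    target: str      # model, tool, API endpoint or data resource involved
    timestamp: float


@dataclass
class AgentAsset:
    """Inventory entry for one agent: where it runs and what it has touched."""
    agent_id: str
    platforms: Set[str] = field(default_factory=set)
    # Edges of the asset graph, grouped by event kind: kind -> set of targets.
    edges: DefaultDict[str, Set[str]] = field(default_factory=lambda: defaultdict(set))
    event_count: int = 0


class AgentInventory:
    """Central store that folds the event stream into per-agent asset records."""

    def __init__(self) -> None:
        self.assets: Dict[str, AgentAsset] = {}

    def ingest(self, event: AgentEvent) -> None:
        asset = self.assets.setdefault(event.agent_id, AgentAsset(event.agent_id))
        asset.platforms.add(event.platform)
        asset.edges[event.kind].add(event.target)
        asset.event_count += 1

    def resources_touched(self, agent_id: str, kind: str) -> Set[str]:
        asset = self.assets.get(agent_id)
        if asset is None:
            return set()
        return set(asset.edges.get(kind, set()))


inventory = AgentInventory()
inventory.ingest(AgentEvent("pr-bot", "code-repo", "tool_call", "github.create_pr", 1.0))
inventory.ingest(AgentEvent("pr-bot", "code-repo", "data_access", "repo:payments", 2.0))
inventory.ingest(AgentEvent("pr-bot", "cloud", "tool_call", "shell.exec", 3.0))
print(sorted(inventory.resources_touched("pr-bot", "tool_call")))
```

The sketch skips the hardest part of real asset identification: deciding that events arriving from different platforms belong to the same agent, which is what the correlation analysis mentioned in the text would have to solve.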

Contextual Governance

Regarding governance and control, Geordie offers Contextual Governance capabilities. According to Geordie, this mechanism constrains the decision-making of AI Agents in real time without reducing business efficiency. By analyzing the context of an agent’s current task, the platform can guide or intervene in its behavior when necessary, keeping the agent’s actions aligned with enterprise security policies and governance requirements. Geordie positions this approach as avoiding the performance overhead of traditional security gateways while allowing more precise control over agent behavior.

Technically, this capability likely relies on embedding a Policy Decision Layer within the agent execution flow. The system dynamically generates execution policies by analyzing information such as task context, user identity, data sensitivity levels, and tool permission scopes. When an agent initiates a tool call or a sensitive operation request, the policy engine evaluates it in real-time and decides to allow, restrict, or block the request based on predefined policy rules. Furthermore, the system may incorporate semantic analysis and task comprehension to proactively identify potentially risky operations, enabling finer-grained governance control. Several AI security vendors (e.g., Lakera) have already introduced similar technical mechanisms in their products, incorporating real-time policy control and guardrails within LLM or agent call chains [8].
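A policy decision layer of this kind can be sketched as an ordered rule list evaluated against the context of each tool call. The Python below is a minimal illustration under assumed names (`ToolRequest`, `Rule`, `PolicyEngine`, the 0 to 3 sensitivity scale, and the example rules are all hypothetical); it shows how user identity, data sensitivity, tool scope, and task context can combine into an allow, restrict, or block decision, not how Geordie or Lakera implement it.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List, Optional, Tuple


class Decision(Enum):
    ALLOW = "allow"
    RESTRICT = "restrict"  # proceed with reduced scope, e.g. read-only or redacted output
    BLOCK = "block"


@dataclass(frozen=True)
class ToolRequest:
    """Context the policy layer sees when an agent is about to call a tool."""
    agent_id: str
    user: str              # identity the agent is acting on behalf of
    tool: str
    data_sensitivity: int  # hypothetical scale: 0 public, 1 internal, 2 confidential, 3 restricted
    task: str              # label for the task the agent is currently pursuing


@dataclass(frozen=True)
class Rule:
    name: str
    matches: Callable[[ToolRequest], bool]
    decision: Decision


class PolicyEngine:
    """Evaluates rules in order; the first matching rule decides."""

    def __init__(self, rules: List[Rule], default: Decision = Decision.ALLOW) -> None:
        self.rules = rules
        self.default = default

    def evaluate(self, req: ToolRequest) -> Tuple[Decision, Optional[str]]:
        for rule in self.rules:
            if rule.matches(req):
                return rule.decision, rule.name
        return self.default, None


engine = PolicyEngine([
    Rule("contractor-no-confidential",
         lambda r: r.user.startswith("contractor:") and r.data_sensitivity >= 2, Decision.BLOCK),
    Rule("no-shell-on-restricted-data",
         lambda r: r.tool == "shell.exec" and r.data_sensitivity >= 3, Decision.BLOCK),
    Rule("read-only-for-confidential",
         lambda r: r.data_sensitivity == 2, Decision.RESTRICT),
    Rule("tool-outside-task-scope",
         lambda r: r.task == "summarize" and r.tool.startswith("email."), Decision.BLOCK),
])

print(engine.evaluate(ToolRequest("pr-bot", "alice", "shell.exec", 3, "fix-bug")))
```

First-match ordering keeps outcomes predictable, and returning the rule name gives the audit trail a reason for every decision. The sketch defaults to allowing unmatched requests; a deny-by-default posture is stricter but noisier. The semantic analysis the text mentions would sit in front of such an engine, turning a free-form agent action into structured request fields.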

Dynamic Behavioral Oversight

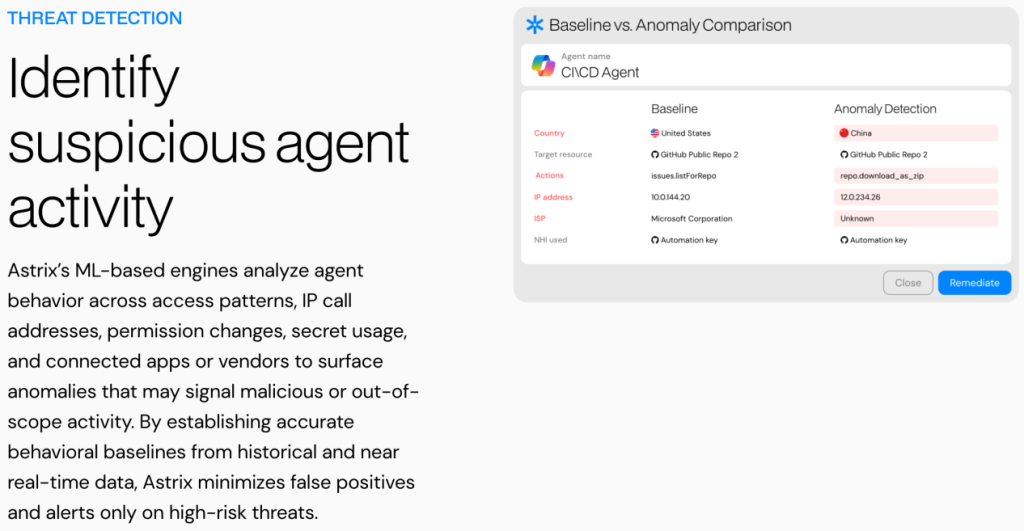

In terms of runtime monitoring, Geordie also provides Dynamic Behavioral Oversight capabilities. The platform applies behavioral analysis to continuously monitor the operational state of AI Agents, identifying behavioral drift and deviations from established policies, and it also supports monitoring of multi-agent collaborative workflows. In addition, the system supports agent lifecycle management, covering version updates, permission changes, and policy adjustments, so that security governance keeps pace as the organization’s agents continue to evolve.

From an implementation perspective, this capability likely builds behavioral baseline models by continuously collecting agent execution logs, tool call sequences, and decision path information. When the system detects behavioral characteristics significantly different from historical patterns, it automatically triggers an anomaly detection mechanism to flag and alert on potential risky behaviors. Simultaneously, the platform can use workflow analysis techniques to model collaborative relationships among multiple agents, identifying anomalous nodes or potential risk propagation paths within complex task chains. This behavioral-pattern-based security monitoring approach is emerging in the AI security field. For instance, vendors like Astrix Security [9] have proposed behavioral monitoring and risk detection mechanisms within their AI application security platforms, a technical approach that also shares some similarities with the UEBA (User and Entity Behavior Analytics) model in traditional security.
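A behavioral baseline can be as simple as counting which tool-to-tool transitions an agent normally makes. The Python sketch below swaps in that deliberately basic technique (transition-frequency scoring over tool-call sequences) to show the baseline-then-flag pattern; it is not Geordie’s or Astrix’s model, and the class name, `min_count`, and the 0.5 threshold are illustrative assumptions.

```python
from collections import Counter
from typing import Iterable, List, Sequence, Tuple

Transition = Tuple[str, str]


def transitions(session: Sequence[str]) -> List[Transition]:
    """Consecutive tool-call pairs, e.g. [a, b, c] -> [(a, b), (b, c)]."""
    return list(zip(session, session[1:]))


class SequenceBaseline:
    """Counts the tool-to-tool transitions seen in an agent's past sessions."""

    def __init__(self, min_count: int = 2) -> None:
        self.counts: Counter = Counter()
        self.min_count = min_count  # transitions seen fewer times are treated as rare

    def fit(self, sessions: Iterable[Sequence[str]]) -> "SequenceBaseline":
        for session in sessions:
            self.counts.update(transitions(session))
        return self

    def anomaly_score(self, session: Sequence[str]) -> float:
        """Fraction of the session's transitions that are rare or unseen (0.0 to 1.0)."""
        pairs = transitions(session)
        if not pairs:
            return 0.0
        rare = sum(1 for pair in pairs if self.counts[pair] < self.min_count)
        return rare / len(pairs)

    def is_anomalous(self, session: Sequence[str], threshold: float = 0.5) -> bool:
        return self.anomaly_score(session) > threshold


history = [
    ["repo.read", "llm.generate", "repo.write", "ci.run"],
    ["repo.read", "llm.generate", "repo.write", "ci.run"],
    ["repo.read", "llm.generate", "ci.run"],
]
baseline = SequenceBaseline(min_count=2).fit(history)

normal = ["repo.read", "llm.generate", "repo.write", "ci.run"]
odd = ["repo.read", "secrets.read", "http.post", "ci.run"]
print(baseline.anomaly_score(normal), baseline.is_anomalous(normal))
print(baseline.anomaly_score(odd), baseline.is_anomalous(odd))
```

A production system would use richer features (timing, data volumes, targets touched, per-workflow baselines) and would need to absorb legitimate drift, for example by refreshing the baseline after an approved version update; otherwise every release would look anomalous.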

Beam Risk Mitigation Engine

One of Geordie’s core technical components is its proprietary Beam Risk Mitigation Engine. Beam analyzes context in real time to provide dynamic guidance while an AI Agent is making decisions, allowing it to intervene before risks materialize. For example, when an agent plans to access sensitive data or execute a potentially dangerous operation, Beam can steer it toward a safer behavioral path through contextual adjustments or policy constraints. The stated aim is proactive risk defense that does not disrupt business continuity.

Architecturally, Beam likely introduces a real-time risk assessment module into the agent’s decision-making chain that dynamically scores each action the agent is about to perform. When the system identifies potentially high-risk behavior, it can alter the agent’s decision by adjusting contextual prompts, restricting tool-call parameters, or substituting execution paths. This effectively embeds security controls into the agent’s execution logic, so that risk is addressed during task execution rather than through after-the-fact detection. Related ideas are gaining attention in AI safety and security work: Anthropic’s Constitutional AI research [10] constrains model behavior with an explicit set of principles, although it applies them during training rather than at runtime, while Protect AI [11] has promoted runtime protection for AI applications, which intervenes while the application is running.
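Beam’s internals are not public, so the following is only a hypothetical Python sketch of the score-then-intervene pattern described above. The risk weights, thresholds, and names (`PlannedAction`, `risk_score`, `mitigate`, and the `human.approve` step) are illustrative assumptions. It models two of the three interventions mentioned: tightening tool-call parameters for medium-risk actions and substituting a different execution path (here, a human approval step) for high-risk ones; prompt-level adjustments are not modeled.

```python
from dataclasses import dataclass, field, replace
from typing import Any, Dict

# Hypothetical weights; a real engine would configure or learn these.
TOOL_RISK: Dict[str, float] = {"file.read": 0.1, "db.query": 0.2, "http.post": 0.5, "db.delete": 0.7}
LABEL_RISK: Dict[str, float] = {"public": 0.0, "internal": 0.2, "confidential": 0.5}


@dataclass(frozen=True)
class PlannedAction:
    """An action the agent intends to take, captured before it executes."""
    tool: str
    data_label: str  # classification of the resource the action touches
    params: Dict[str, Any] = field(default_factory=dict)
    leaves_org: bool = False  # True if output is sent outside the organization


def risk_score(action: PlannedAction) -> float:
    """Additive score capped at 1.0; unknown tools and labels each add a cautious 0.5."""
    score = TOOL_RISK.get(action.tool, 0.5) + LABEL_RISK.get(action.data_label, 0.5)
    if action.leaves_org:
        score += 0.3
    return min(score, 1.0)


def mitigate(action: PlannedAction, restrict_at: float = 0.5,
             divert_at: float = 0.8) -> PlannedAction:
    """Return the action to actually run: unchanged, restricted, or diverted."""
    score = risk_score(action)
    if score >= divert_at:
        # Substitute the execution path: hand the decision to a human reviewer.
        return PlannedAction("human.approve", action.data_label,
                             {"original_tool": action.tool, "risk": score})
    if score >= restrict_at:
        # Restrict parameters instead of blocking: cap result size, force read-only.
        params = dict(action.params)
        params["limit"] = min(int(params.get("limit", 100)), 10)
        params["read_only"] = True
        return replace(action, params=params)
    return action  # low risk: proceed as planned


print(mitigate(PlannedAction("file.read", "public", {"path": "README.md"})))
print(mitigate(PlannedAction("db.query", "confidential", {"limit": 500})))
print(mitigate(PlannedAction("db.delete", "confidential", {"table": "customers"})))
```

An additive, capped score keeps the logic easy to audit. A real engine would more plausibly combine learned signals with policy, and it would have to feed the modified action back to the agent so that the agent’s plan stays consistent with what was actually executed.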

Rapid Deployment & Seamless Integration

The Geordie platform also emphasizes rapid deployment and seamless integration. According to Geordie, enterprises can typically gain initial visibility into their AI Agents within about 10 minutes of integrating the platform. The system supports flexible deployment across cloud environments, code development environments, and endpoint devices, and integrates with mainstream AI frameworks, cloud platforms, and enterprise tools. It also covers several kinds of agents, including code-development agents, SaaS agents, endpoint agents, and low-code/no-code agents, so enterprises can achieve unified agent security governance without replacing their existing technology stack.

From a technical implementation perspective, this rapid integration likely relies on lightweight methods such as SDKs, API gateway proxies, or pluggable components, which let the platform connect to an enterprise’s AI Agent runtime environment without altering the existing system architecture. In a code-development agent scenario, for example, the system might integrate with the agent framework through an SDK or middleware to capture tool calls and inference events. For SaaS agents and enterprise applications, it might achieve behavioral monitoring and policy control through API integration or identity proxies. In cloud environments, data collection and policy enforcement could be unified through container sidecars or proxy gateways. This pluggable, modular integration model is common in today’s AI application ecosystem: LangChain’s callback mechanism and the structured tool-calling interfaces of the OpenAI and Anthropic APIs, for instance, give third-party security platforms well-defined points at which to observe the runtime behavior of AI Agents.
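For the SDK or middleware route, the essential move is to wrap each tool the agent can call so that the call is reported and checked before it runs. The Python sketch below uses a plain decorator for this; `monitored_tool`, `EVENT_LOG`, and `default_policy` are hypothetical names, and a real integration would more likely hook the agent framework’s own extension points (LangChain’s callback handlers, for example) and ship events to a remote collector rather than appending them to an in-memory list.

```python
import functools
import time
from typing import Any, Callable, Dict, List

# Stand-in for the collector / event pipeline an SDK would ship events to.
EVENT_LOG: List[Dict[str, Any]] = []

PolicyHook = Callable[[str, str, Dict[str, Any]], bool]


def default_policy(agent_id: str, tool: str, kwargs: Dict[str, Any]) -> bool:
    """Hypothetical policy hook: return False to block the call."""
    return tool != "shell.exec"


def monitored_tool(agent_id: str, tool_name: str, policy: PolicyHook = default_policy):
    """Wrap a tool function so every call is checked against policy and logged."""
    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            # Only keyword arguments are passed to the policy in this sketch.
            allowed = policy(agent_id, tool_name, kwargs)
            EVENT_LOG.append({"agent_id": agent_id, "tool": tool_name,
                              "kwargs": dict(kwargs), "allowed": allowed,
                              "ts": time.time()})
            if not allowed:
                raise PermissionError(f"{tool_name} blocked by policy for {agent_id}")
            return fn(*args, **kwargs)
        return wrapper
    return decorator


@monitored_tool("ops-agent", "http.get")
def fetch_status(url: str) -> str:
    return f"200 OK from {url}"  # placeholder instead of a real HTTP request


@monitored_tool("ops-agent", "shell.exec")
def run_command(cmd: str) -> str:
    return f"ran {cmd}"  # never reached under default_policy


print(fetch_status(url="https://status.example.com"))
try:
    run_command(cmd="rm -rf /tmp/cache")
except PermissionError as err:
    print(err)
print([(e["tool"], e["allowed"]) for e in EVENT_LOG])
```

Because the wrapper raises before the tool body executes, a blocked call has no side effects, yet the denial is still recorded in the event stream, which is what both the monitoring and the policy-control halves of such an integration need.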

From an overall architectural perspective, Geordie essentially attempts to construct a new security capability layer: AI Agent Runtime Security. Through capabilities like visibility, policy governance, behavioral monitoring, and risk mitigation, the platform provides continuous security control throughout the entire lifecycle of an AI Agent’s task execution.

Conclusion

This article has examined how, amid rapid advances in generative AI and large language models, enterprises are moving from “using AI tools” to “deploying AI Agents.” These agents, which can autonomously invoke tools, access data, and execute complex tasks, are becoming significant execution entities within enterprise digital ecosystems. However, the autonomy and non-determinism of AI Agents introduce new security challenges: invisible agent assets, unobservable behavior, unclear permission boundaries, and difficulty assessing risk continuously. Traditional security frameworks centered on users, devices, or applications are ill-suited to this emerging scenario, so enterprises urgently need to build security governance capabilities specifically for AI Agents.

It is against this backdrop that Geordie AI has introduced its AI Agent Security and Governance platform. By providing unified agent asset discovery, behavioral observability, risk assessment, and policy control, the platform offers enterprises a security management framework tailored to the agent runtime environment. It helps organizations map their internal AI Agent ecosystem, continuously monitor changes in agent behavior and permissions, and proactively detect and govern potential risks, thereby improving security and control when deploying AI Agents at scale.

Looking at industry trends, as enterprise reliance on AI Agents deepens, security governance surrounding agents is poised to become a crucial direction within the broader AI security field. In the future, AI Agent Security is expected to evolve into a critical component of enterprise security architecture, much like cloud security and identity security have. As an early explorer in this domain, Geordie AI’s technical approach and product philosophy offer a new reference for the industry and reflect the security sector’s ongoing evolution toward “agent-oriented security governance.”

References

[1] https://www.geordie.ai/about

[2] https://finance.yahoo.com/news/geordie-exits-stealth-6-5m-100000822.html

[3] https://www.geordie.ai/product

[4] https://cyberstrategyinstitute.com/openclaw-risks-autonomous-ai-agents/

[5] https://mp.weixin.qq.com/s/FlhMmYf0YNsj2FCzqHiE8g

[6] https://www.langchain.com/langsmith/observability

[7] https://arize.com/ai-agents/agent-observability/

[8] https://docs.lakera.ai/guard

[9] https://astrix.security/product/secure-ai-agents/

[10] https://arxiv.org/abs/2212.08073

[11] https://protectai.com/