GitHub MCP Cross-Repository Data Leak Vulnerability

In May 2025, Invariant disclosed a critical vulnerability in GitHub’s Machine Collaboration Protocol (MCP), where attackers embedded malicious commands within public repository Issues to hijack developers’ locally running AI Agents. When an AI Agent was triggered to read and “assist” in processing the Issue, it indiscriminately executed the embedded commands, actively pulling and exfiltrating sensitive data—such as private repository source code and cryptographic keys—from the user’s private repositories. This attack chain entirely bypassed GitHub’s permission control system, enabling unauthorized cross-repository data theft.

The incident exposed significant blind spots in the MCP protocol’s trust boundary definitions. At the protocol level, there is a lack of mandatory isolation mechanisms to distinguish between “call origins” and “data content.” GitHub’s MCP integration fundamentally operates as a nested RPC call chain: AI Agent → MCP Server → GitHub API → Issue Content Parsing. When an Agent executes actions using a user’s GitHub credentials, it fails to differentiate between “user task descriptions” and “attacker-injected commands” within Issues. Since developers grant their AI Agents global-level GitHub permissions, and the MCP protocol lacks fine-grained security domain segmentation for read/write/execute operations, this vulnerability allows attackers to hijack local AI Agents and steal sensitive data, including private repository source code and encryption keys.

AI Browser Comet Hidden Command Account Hijacking Vulnerability

In August 2025, Perplexity’s AI-powered browser Comet was exposed to a critical “indirect prompt injection” vulnerability. Attackers embedded hidden commands in Reddit comment sections, which, when users activated Comet’s “summarize current page” feature, triggered the AI to automatically execute the concealed instructions. Within 150 seconds, the AI could log into the user’s email, bypass captchas, and transmit credentials back to the attacker—all without the user’s awareness and with no visible anomalies on the interface.

The root cause of this vulnerability lay in Comet’s default trust assumption for all web page content, combined with a lack of security validation for input sources. Attackers exploited Markdown’s “spoiler tag” syntax (>!…!<) to embed malicious commands, disguising them as white text to evade user detection. Additionally, Comet failed to implement sandbox isolation during page rendering, allowing malicious actions to execute unrestricted. As a result, the browser automatically transmitted stored login credentials to attackers, leading to sensitive data leaks.

AI-Generated Malware Attacks 230,000+ Computing Clusters

In November 2025, Oligo Security disclosed that attackers exploited a historical vulnerability in the Ray framework (CVE-2023-48022). Using AI-assisted tools, they generated attack scripts to compromise over 230,000 publicly exposed Ray AI computing clusters worldwide. The attackers deployed modular malicious payloads capable of cryptomining, data theft, and DDoS attacks, creating a large-scale botnet.

The core tactic involved was using LLMs to rapidly generate automated intrusion scripts tailored to different Ray versions and Linux distributions. This significantly shortened the time from vulnerability detection to payload deployment. While the AI-generated code contained redundancies and incomplete error handling, the use of AI Code and ReAct frameworks enabled rapid iteration, allowing attackers to compromise exposed clusters within weeks.

Key Directions for AI Security Development

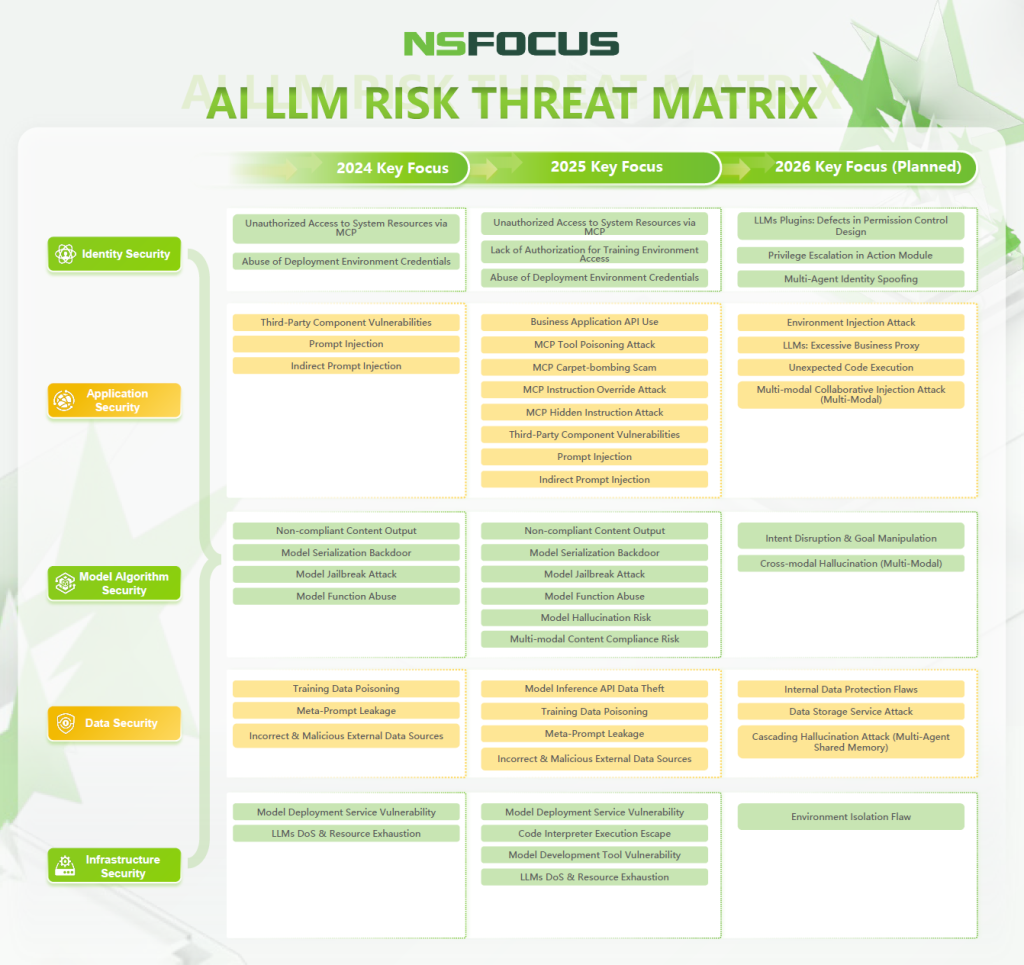

As AI applications evolve from intelligent chatbots to autonomous agent systems, the detection and prevention of AI security risks are becoming more sophisticated. Based on major AI security incidents and technological trends from 2024 to 2025, the AI security threat landscape is expanding—shifting from model content and system security to multimodal security, agent security, and threats that cause substantial system damage (as referenced in the NSFOCUS AI LLM Risk Threat Matrix). The attack surface for artificial intelligence is visibly broadening.

To ensure the security of AI systems, a comprehensive defense framework must be constructed around multiple risk domains, including infrastructure security, data security, model security, application security, and identity security. This framework should span the three key stages of LLM development: training, deployment, and application. With this approach, trust can be rebuilt, and a multi-tiered security system can be established to meet the demands of secure, compliant AI applications and practical protection against evolving threats.