On February 20, 2026, AI company Anthropic released a new code security tool called Claude Code Security. The release came at a time when global capital markets were highly sensitive to the prospect of AI disrupting the traditional software industry; it quickly triggered sharp market swings and pushed down the share prices of major US cybersecurity companies. The tool is positioned as a built-in security feature of Claude Code and is currently available to enterprise and team customers as a limited research preview, with accelerated access for open source project maintainers. From the perspective of technological evolution, the release of Claude Code Security represents the latest progress in AI-driven security offense and defense.

NSFOCUS has long tracked the shift toward intelligent attack and defense and was among the first to integrate AI deeply into its security capabilities. Market participants are widely concerned that, as AI keeps improving at vulnerability discovery, threat detection, and automated response, the products and services of traditional cybersecurity companies may be replaced outright by AI tools. We believe this fear stems from an unclear understanding of where AI capabilities end and from uncertainty about how fast the technology will evolve. In our view, the cybersecurity industry will be a net beneficiary of AI: AI will not replace cybersecurity products but will strengthen defensive capabilities and operational efficiency. In this post, NSFOCUS draws on its own intelligent attack and defense practice to provide a technical analysis of Claude Code Security, the new code security tool released by Anthropic.

Claude Code Security Technical Analysis

Claude Code Security is a code security auditing solution built into Claude Code and CI/CD pipelines. It can be seen as a milestone in Anthropic’s effort to turn cutting-edge research into defensive tools. It combines the native reasoning capabilities of Claude 4.6 with the core research results of the Frontier Red Team, Anthropic’s internal red team, in complex network attack and defense, 0-day vulnerability exploitation, and national security assessment. Compared with traditional static application security testing (SAST) tools, it takes a more intelligent approach to vulnerability detection. Rather than relying on a predefined rule base, it emulates the way human security researchers think, building a deep understanding of the code’s contextual logic, data flow paths, and component interactions in order to find complex vulnerabilities that traditional tools struggle to catch. This choice of technical path reflects the paradigm shift of AI in security from “rule-based” to “reasoning-based”.

1.1 Team Background and Research Results

The core development team of Claude Code Security consists of Anthropic Labs and the Frontier Red Team. Anthropic Labs is led by Chief Product Officer Mike Krieger, who is responsible for turning “experimental capabilities” into production-grade tools. Under the internally implemented “Claude Write Claude” model, the model carried out highly autonomous audits for several months on projects with 12.5 million lines of code (such as vLLM) before release. The Frontier Red Team is responsible for Anthropic’s cybersecurity red-team research. The team not only analyzes the risks that models pose to cybersecurity, biosecurity, and autonomous systems, but also directly develops agent frameworks (such as Incalmo) for vulnerability detection. The model’s cybersecurity capabilities are mainly the responsibility of the Cybersecurity RL team, which belongs to Anthropic’s Horizons department and is committed to safely advancing the capability boundaries of AI models in cybersecurity. Its research covers secure coding, vulnerability remediation, and other areas of defensive cybersecurity, with reinforcement learning (RL) as the main technique. We have compiled Anthropic’s main cybersecurity achievements over the past year, which span intelligent code security auditing and vulnerability mining, intelligent network penetration, and digital forensics and incident response (DFIR).

1.2 Preview Version Features

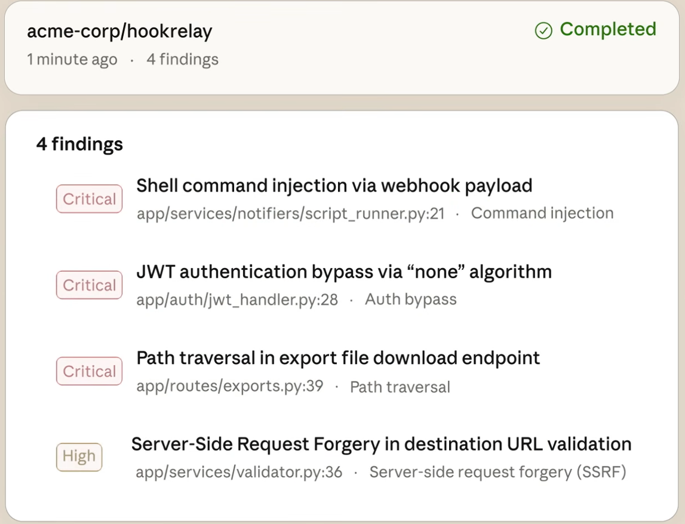

According to Anthropic’s official website and the limited video demonstrations available, Claude Code Security has three main capabilities: repository-level automatic code auditing, vulnerability analysis and verification, and fix suggestions with automated pull requests (PRs).

1. Code repository-level automatic audit

By connecting a code repository, developers can ask the AI to audit the entire codebase.

2. Code vulnerability analysis and verification

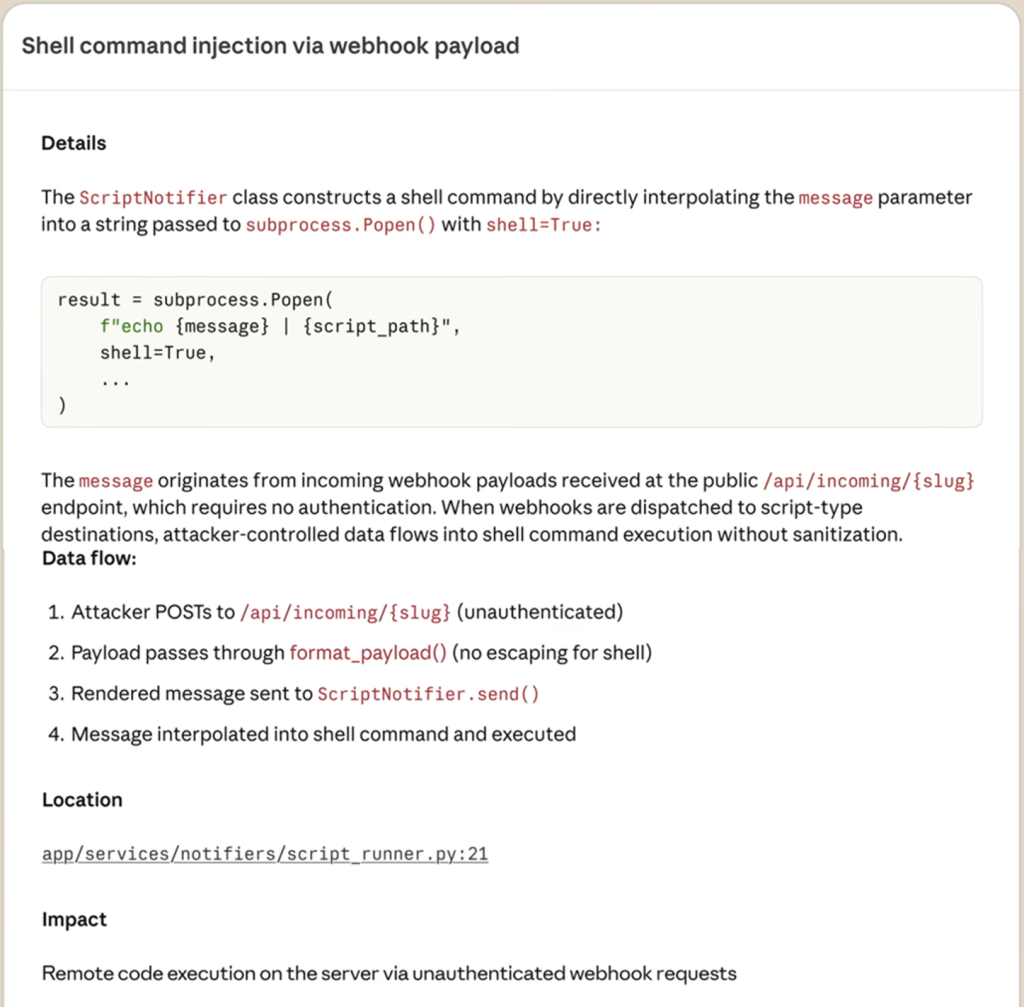

Each vulnerability report contains the following four parts:

- Detail: An in-depth textual explanation of the vulnerability’s cause, attack surface, and potential impact.

- Data Flow: Traces how untrusted (tainted) input propagates between program components, as a human researcher would, and identifies complex business logic errors.

- Location: Pinpoints the specific line of code, file, and affected module where the vulnerability occurs.

- Impact: An assessment of vulnerability metrics, including attack complexity, attack vector, privileges required, user interaction, and scope of impact.
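To make the four-part report concrete, the following is a minimal Python sketch of how such a finding could be modeled. The class and field names (`Finding`, `Location`, `Impact`, and so on) are our own illustrative assumptions and do not reflect Anthropic’s actual report schema.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Location:
    file: str
    line: int
    module: str


@dataclass
class Impact:
    # Hypothetical field names that mirror the metrics listed in the report.
    attack_vector: str
    attack_complexity: str
    privileges_required: str
    user_interaction: str
    scope: str


@dataclass
class Finding:
    detail: str            # free-text explanation of cause and impact
    data_flow: List[str]   # ordered hops of tainted input, source to sink
    location: Location
    impact: Impact

    def summary(self) -> str:
        """Render a one-line summary: where, how reachable, and the taint path."""
        path = " -> ".join(self.data_flow)
        return f"{self.location.file}:{self.location.line} [{self.impact.attack_vector}] {path}"


# Illustrative, made-up finding used only to exercise the structure.
example = Finding(
    detail="User-controlled id is concatenated into a SQL statement.",
    data_flow=["request.args['id']", "build_query()", "cursor.execute()"],
    location=Location(file="app/orders.py", line=42, module="orders"),
    impact=Impact("network", "low", "none", "none", "unchanged"),
)
print(example.summary())
```

Keeping the data flow as an ordered list of hops makes it straightforward to render the source-to-sink path that a reviewer needs to confirm.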

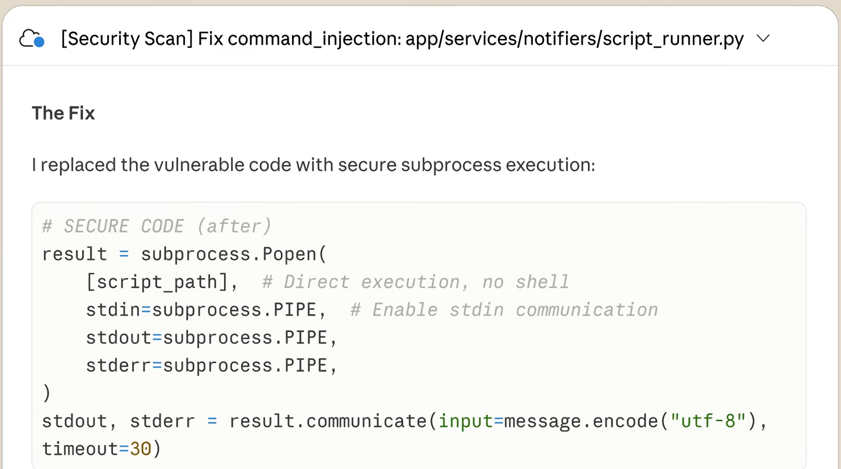

3. Vulnerability Fixing Recommendations and Automated PR

The AI automatically generates targeted patch suggestions; developers can preview the fix and open a pull request with one click. Functionally, Claude Code Security can already read code line by line, track the flow of data through an application, understand the interaction logic between components, and identify complex security issues such as business logic flaws and access control failures, much as a human security researcher would.

Anthropic open-sourced the claude-code-security-review project six months ago, which appears to be an early version of the tool. In addition, agentic-pbt, a joint project between Anthropic and Northeastern University in the United States, also relates to agentic AI code security auditing. We have extracted the following key technologies from these projects:

- Diff-aware scanning: Because of context limits, in-depth audits are performed only on the changes in a PR, and integration with the relevant CI/CD workflows improves efficiency.

- Multi-stage verification process: After a vulnerability is found, the model invokes a filtering module that uses the model to perform a second pass of “refutation reasoning” and automatically filters out findings with no real impact.

- Property-based testing: This technique was proposed in agentic-pbt. A dedicated red-team agent infers the properties that code in large projects should satisfy and automatically generates test cases to probe logical boundaries. It has already been used to find and fix vulnerabilities in several top Python packages.
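The property-based testing idea in the last item can be illustrated with a small, self-contained sketch. It is not the agentic-pbt implementation: the run-length codec, the deliberately planted bug, and the hand-rolled generator below are all assumptions chosen for illustration, and a real setup would more likely use a dedicated library such as Hypothesis. The property being checked is the round trip `decode(encode(s)) == s`.

```python
import random
from itertools import groupby


def rle_encode_buggy(s: str) -> str:
    # Deliberate bug for illustration: runs longer than 9 are silently truncated.
    return "".join(f"{min(len(list(g)), 9)}{ch}" for ch, g in groupby(s))


def rle_encode_fixed(s: str) -> str:
    # Long runs are split into chunks of at most 9 so each count is one digit.
    out = []
    for ch, group in groupby(s):
        remaining = len(list(group))
        while remaining > 0:
            chunk = min(remaining, 9)
            out.append(f"{chunk}{ch}")
            remaining -= chunk
    return "".join(out)


def rle_decode(encoded: str) -> str:
    # The encoding is a sequence of (single digit, character) pairs.
    return "".join(encoded[i + 1] * int(encoded[i]) for i in range(0, len(encoded), 2))


def random_runs(rng: random.Random) -> str:
    # Generator biased toward long runs, where boundary bugs tend to hide.
    return "".join(rng.choice("abc") * rng.randint(1, 15) for _ in range(rng.randint(0, 5)))


def find_counterexample(encode, decode, trials: int = 200, seed: int = 0):
    """Return the first input violating decode(encode(s)) == s, or None."""
    rng = random.Random(seed)
    for _ in range(trials):
        s = random_runs(rng)
        if decode(encode(s)) != s:
            return s
    return None


print("buggy encoder counterexample:", find_counterexample(rle_encode_buggy, rle_decode))
print("fixed encoder counterexample:", find_counterexample(rle_encode_fixed, rle_decode))
```

The point of the sketch is the division of labor: the property is stated once, and the generator explores boundary conditions (here, runs of ten or more characters) that a hand-written unit test could easily miss.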

The improvement brought by the model upgrade should not be underestimated either. Claude Code Security ships with the Claude 4.6 series models, which provide stronger capabilities. According to the research report, in early testing Claude needed Incalmo, a custom toolset developed by the red team, to reduce complexity. By Claude 4.6, however, the model can directly use standard open source tools such as Bash and Kali to perform autonomous reconnaissance and vulnerability mining in complex networks.

Adaptive thinking, introduced in Claude 4.6, also helps with vulnerability mining. It refers to the model’s ability to decide autonomously how deeply to reason based on task complexity: simple vulnerabilities get a fast response, while complex logic errors trigger the “Max Effort” mode, which allocates more thinking tokens for deeper analysis. In addition, the Claude 4.6 models offer a context window of up to 1,000,000 tokens (1M), enough to take in a very large codebase, including historical patches, architecture documents, and dependencies, in a single pass.

Analysis of LLM Application and Code Audit Technology

Next, we look at how LLMs are reshaping the technical paradigm of code auditing and analyze their application boundaries and challenges in complex production environments.

2.1 Evolution of Technology Paradigm: From “rule-driven” to “semantic reasoning”

With the release of Claude Code Security, the application of LLM in the field of code security auditing has moved from the proof-of-concept stage to actual deployment. Compared with traditional static application security testing (SAST) tools, LLM-driven code auditing represents a change in the technical paradigm.

Traditional SAST tools (such as SonarQube and CodeQL) rely on predefined rule bases and pattern matching, analyzing code security by building abstract syntax trees (ASTs), data flow graphs (DFGs), and control flow graphs (CFGs). This approach is reliable for detecting known patterns such as hard-coded credentials, weak encryption algorithms, and simple injection vulnerabilities, but it struggles to understand complex business logic, has limited ability to analyze cross-component interactions, and depends entirely on manually defined rules.
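As a rough illustration of what a “rule-driven” check looks like, here is a minimal sketch of a hard-coded credential rule built on Python’s standard `ast` module. The keyword list and the function name `find_hardcoded_credentials` are our own assumptions; production tools such as SonarQube or CodeQL use far richer rule languages and data flow analysis.

```python
import ast

# Hypothetical keyword list; a real rule base would be much larger.
SUSPECT_NAMES = ("password", "passwd", "secret", "api_key", "token")


def find_hardcoded_credentials(source: str):
    """Flag assignments of non-empty string literals to suspicious names."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Assign):
            continue
        value = node.value
        if not (isinstance(value, ast.Constant) and isinstance(value.value, str) and value.value):
            continue
        for target in node.targets:
            if isinstance(target, ast.Name) and any(k in target.id.lower() for k in SUSPECT_NAMES):
                findings.append((node.lineno, target.id))
    return findings


sample = 'db_password = "hunter2"\nuser = "alice"\napi_key = load_key()\n'
print(find_hardcoded_credentials(sample))
```

The rule is deterministic and cheap, which is exactly its strength for known patterns. It also shows the limitation described above: nothing in a syntactic rule of this kind can tell whether a handler forgot to check who owns the record it returns.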

In contrast, LLM-driven code auditing is built on semantic understanding and reasoning. Claude Code Security, for example, relies on the Claude Opus 4.6 model to understand the business intent and logical relationships of code as a human security expert would. This “inference audit” model yields gains along several dimensions: it can identify business logic vulnerabilities and unauthorized access flaws that traditional tools find hard to define; it significantly reduces the false positive rate through multi-stage verification; it generates accurate, directly applicable fixes; and it is language-independent, which greatly reduces deployment and maintenance costs.

In terms of technical architecture, traditional SAST is “rule-driven” deterministic analysis, while LLM auditing is “semantics-driven” probabilistic reasoning. This difference makes the two complementary in practice: traditional tools still have the edge in compliance checks, scanning for known vulnerability patterns, and detection efficiency, while LLMs show unique value in complex logic analysis and 0-day vulnerability discovery.

2.2 Practical Cases and Evolution of Open Source Ecosystem

Anthropic’s internal testing showed that Claude Opus 4.6 autonomously discovered more than 500 high-severity vulnerabilities without specific guidance, many of them in open source projects that experts had been reviewing for decades. The open source community is also actively exploring LLM auditing. RepoAudit, a research project accepted at ICML 2025, uses a multi-agent architecture to perform repository-level code auditing; it detected 40 vulnerabilities in 15 real-world projects with a precision of 78.43%, at an average cost of only US$2.54 per project. The DrillAgent project addresses the “hallucination” problem in LLM audits through execution-state awareness and iterative verification, increasing the CVE reproduction success rate by 52.8%. In addition, projects such as SAST-Genius, YoAuditor, and Bugdar have demonstrated a range of application scenarios, including combining LLMs with traditional tools, fast CI/CD integration, and PR-level auditing. These cases show that LLM auditing can handle complex production code and that its ecosystem is evolving from single model calls to complex agent collaboration.

2.3 Core Capability Boundaries and Differentiated Application Scenarios

Although LLMs have made impressive progress in semantic understanding, defining their capability boundaries soberly is key to technology selection in enterprise engineering. With that in mind, we analyzed the applicable scenarios and technical limitations of LLM-driven code auditing:

Applicable scenarios:

1. In-depth identification of complex business logic vulnerabilities: Traditional SAST tools are based on hard rules, which makes it difficult for them to understand the business intent behind the code. The core advantage of LLMs lies in the semantic understanding acquired during pre-training, which lets them analyze logic risks such as “access control defects” and “business logic bypass”. In an “order information retrieval” scenario, for example, an LLM can autonomously determine whether the code is missing an authorization check on the order owner and supply the missing check based on context. This reasoning based on business semantics is a dimension that traditional tools cannot reach.

2. Cross-file and cross-component complex link analysis: In modern software architectures, vulnerabilities are often hidden in the interaction chains between multiple modules and services. Traditional tools often run into “path explosion” during interprocedural analysis, which causes the analysis to abort. With their abstract reasoning capabilities, LLMs can identify high-risk interaction patterns without enumerating every path. For example, the agent mechanism adopted by RepoAudit uses the model to distinguish relevant from irrelevant paths, which greatly improves audit efficiency on large codebases.

3. Unknown 0-day vulnerability mining: Traditional tools rely on predefined vulnerability libraries and have no awareness of unknown patterns. Having learned from massive amounts of code, LLMs can identify potential risks that structurally resemble known threats but do not fall into any defined category.
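The order information scenario from the first item can be sketched in a few lines of Python. The data model and function names below are hypothetical and chosen only for illustration; the point is that the vulnerable and the fixed handler differ by a business rule (who owns the order), not by any syntactic pattern a rule base could match.

```python
from dataclasses import dataclass


@dataclass
class Order:
    order_id: int
    owner_id: int
    total: float


# Hypothetical in-memory store standing in for a database.
ORDERS = {
    1: Order(1, owner_id=100, total=25.0),
    2: Order(2, owner_id=200, total=99.0),
}


def get_order_vulnerable(current_user_id: int, order_id: int) -> Order:
    # Any authenticated user can read any order: the ownership check is missing.
    return ORDERS[order_id]


def get_order_checked(current_user_id: int, order_id: int) -> Order:
    order = ORDERS[order_id]
    # The kind of check an auditor (human or LLM) would expect to find here.
    if order.owner_id != current_user_id:
        raise PermissionError("order does not belong to the caller")
    return order


# User 100 reads user 200's order through the vulnerable handler.
print(get_order_vulnerable(100, 2))
```

Both functions are syntactically unremarkable, which is why this class of flaw is usually found by reasoning about intent rather than by matching patterns.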

Inapplicable scenarios:

Full scans of large-scale codebases remain a major challenge for LLMs today. Although LLMs understand code well, their ability to handle long contexts is inherently limited. Even the latest models with very long context windows cannot process all the code at once when faced with large projects containing millions of lines of code. Projects such as RepoAudit have partially alleviated the problem through agents’ on-demand exploration and memory mechanisms, but they still cannot match the efficiency of traditional SAST tools on very large codebases. For enterprise scenarios that require regular, comprehensive scans of the entire codebase, LLMs are currently better suited to complementing traditional tools by covering their blind spots than to replacing them.

2.4 Key Technical Challenges and Future Breakthrough Directions

To achieve a truly “autonomous automated audit”, the industry still needs to make breakthroughs in the following core technical challenges:

- Context compression and dynamic code loading: For long code paths, future research focuses on how to balance inference accuracy with computational cost by dynamically loading relevant code fragments and retaining key data flow skeletons.

- Result verification and hallucination suppression: To eliminate spurious vulnerability paths that the model may generate, the probabilistic output of the LLM should be combined with deterministic formal methods and effective vulnerability verification mechanisms to ensure that audit results are correct.

- Private deployment and data compliance: Source code is the core asset of an enterprise. How to achieve “small model + high precision” audit capabilities on the edge side while ensuring privacy is a key breakthrough point for enterprise-level implementation.

- Hardening audit tools against attack: As automated auditing becomes widespread, adversarial prompt-injection attacks planted in code and aimed at the LLM will become more frequent. Robust defenses that keep the audit model from being induced or deceived by malicious code will be standard in the next generation of security tools.

Evolution of Offensive and Defensive Patterns in the AI Era

In the face of increasingly complex, stealthy, and intelligent threats, intelligent attack and defense confrontation is becoming an inevitable trend for the industry. In network penetration, we have achieved end-to-end autonomous penetration capabilities. Through core technologies such as multi-agent collaboration, accumulated attack and defense knowledge, and full-process automation, AI has become not only a powerful tool for discovering vulnerabilities and risks but also key support for verifying and upgrading the defense system.

Complex task deconstruction driven by multi-agent architecture

In cybersecurity, a single model often struggles to balance “efficiency” against “in-depth verification”, whereas multi-agent systems (MAS) emulate the way human expert teams work by splitting the job into roles. We implemented a multi-agent collaboration framework organized by task phase nearly a year before Claude Code Tasks. In this architecture, multiple expert agents, each responsible for a different task phase or specialized task, work together. The robustness of the system no longer depends on any single model being optimal but on the quality of information exchange between agents and the efficiency of the feedback loops, which effectively suppresses LLM “hallucination” in complex attack and defense analysis. The architecture decomposes complex penetration testing tasks into executable atomic steps, giving the AI a significantly higher success rate than a single agent when dealing with combined cross-protocol, cross-language vulnerabilities.
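A phase-based pipeline with a verification loop can be sketched as follows. This is a toy illustration under our own assumptions, not the NSFOCUS framework: the agents are stubs that return fixed strings instead of calling a model, and the names `TaskState`, `run_pipeline`, and `grounded_in_recon` are hypothetical. It shows one way a feedback step can reject a claim that is not grounded in facts recorded by an earlier phase.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class TaskState:
    target: str
    facts: Dict[str, List[str]] = field(default_factory=dict)  # accepted output per phase
    log: List[str] = field(default_factory=list)               # rejected claims


Agent = Callable[[TaskState], List[str]]


def recon_agent(state: TaskState) -> List[str]:
    # Stub: a real agent would run tools and parse their output.
    return ["80/tcp open http", "22/tcp open ssh"]


def analysis_agent(state: TaskState) -> List[str]:
    grounded = [f"review service on {fact}" for fact in state.facts["recon"]]
    # Simulated hallucination: a service that recon never observed.
    return grounded + ["review service on 3306/tcp open mysql"]


def grounded_in_recon(phase: str, claim: str, state: TaskState) -> bool:
    # Later phases may only build on facts that recon actually recorded.
    if phase == "recon":
        return True
    return any(fact in claim for fact in state.facts.get("recon", []))


def run_pipeline(target: str, phases: List[Tuple[str, Agent]], verify) -> TaskState:
    state = TaskState(target)
    for name, agent in phases:
        accepted = []
        for claim in agent(state):
            if verify(name, claim, state):
                accepted.append(claim)
            else:
                state.log.append(f"{name}: rejected '{claim}'")
        state.facts[name] = accepted
    return state


result = run_pipeline(
    "192.0.2.10",
    [("recon", recon_agent), ("analysis", analysis_agent)],
    grounded_in_recon,
)
print(result.facts["analysis"])
print(result.log)
```

In this sketch the quality of the result depends on what is passed between phases and on the verifier, rather than on any single agent being right every time.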

Long context dynamic orchestration and attention optimization

Code auditing and network attack and defense are, in essence, ultra-long-sequence tasks. Even though Claude 4.6 offers a million-token context window, it still suffers from diluted attention when processing large volumes of command output or task logs. Through in-depth observation of LLM attention mechanisms, the NSFOCUS intelligent attack and defense team found that the ordering of context and its information density directly affect how accurately the model reasons about an attack chain. To address this, we developed an efficient dynamic context management engine:

- Refined compression and redundancy elimination: For security tasks, semantic dependency analysis is used to remove 80% of non-critical code, retaining only the core skeleton related to data flow and control flow.

- Context reorganization technology: The “sliding window + key information pinning” strategy is adopted to ensure that the model always maintains a high sensitivity to key features during the inference execution process, ensuring the consistency and accuracy of task execution.
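The “sliding window + key information pinning” strategy can be illustrated with a very small sketch. The class `PinnedWindowContext` is a hypothetical name, and the logic is a simplification under our own assumptions rather than the engine described above: pinned items are always kept, while ordinary items are evicted oldest-first once the window is full.

```python
from collections import deque
from typing import List


class PinnedWindowContext:
    """Keep pinned messages forever and only the most recent N ordinary ones."""

    def __init__(self, window_size: int) -> None:
        self.pinned: List[str] = []
        # deque with maxlen drops the oldest entry automatically when full.
        self.window: deque = deque(maxlen=window_size)

    def add(self, message: str, pin: bool = False) -> None:
        if pin:
            self.pinned.append(message)
        else:
            self.window.append(message)

    def render(self) -> List[str]:
        # Pinned facts go first so they are never pushed out by noisy output.
        return self.pinned + list(self.window)


ctx = PinnedWindowContext(window_size=2)
ctx.add("goal: audit the login flow", pin=True)
ctx.add("tool output 1")
ctx.add("tool output 2")
ctx.add("tool output 3")
print(ctx.render())
```

A production engine would also decide what to pin and would measure the window in tokens rather than messages; the sketch only shows the eviction behavior.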

Dynamic knowledge injection and high-reliability call of tool chain

With the spread of protocols such as MCP (Model Context Protocol), the barrier for AI to access security tools has dropped, but new problems of “tool overload” and “parameter drift” have come with it. When an agent faces hundreds of penetration testing tools, call accuracy decreases exponentially as the number of tools grows. The NSFOCUS intelligent attack and defense team has achieved key results in dynamic knowledge injection and on-demand tool loading:

- Dynamic routing mechanism: The system retrieves and injects relevant knowledge content from the tool library in real time according to the current task intention.

- Automatic calibration of complex parameters: A parameter constraint validation layer for security-specific tools raises the stability of AI calls to tools with complex command-line parameters, such as sqlmap and Nmap, to more than 95%, achieving true “out-of-the-box” use.
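The two mechanisms above can be sketched together in a few lines. Everything here is an illustrative assumption rather than the NSFOCUS implementation: the routing uses naive keyword overlap instead of real retrieval, the tool descriptions are made up, and the validator covers only a small allowlist of well-known Nmap options (`-sV`, `-sS`, `-Pn`, `-p`). The command is built as an argument list and is never executed.

```python
import ipaddress
import re
from typing import Dict, List, Sequence

# Hypothetical one-line descriptions standing in for a tool knowledge base.
TOOL_DOCS: Dict[str, str] = {
    "nmap": "port scan service version detection host discovery",
    "sqlmap": "sql injection detection database enumeration",
}


def route_tools(task: str, docs: Dict[str, str] = TOOL_DOCS, top_k: int = 1) -> List[str]:
    """Load only the tools whose description overlaps most with the task."""
    words = set(task.lower().split())
    ranked = sorted(docs, key=lambda name: len(words & set(docs[name].split())), reverse=True)
    return ranked[:top_k]


ALLOWED_NMAP_FLAGS = {"-sV", "-sS", "-Pn"}
PORT_SPEC = re.compile(r"\d{1,5}(-\d{1,5})?(,\d{1,5}(-\d{1,5})?)*")


def build_nmap_argv(target: str, ports: str, flags: Sequence[str] = ()) -> List[str]:
    """Validate model-proposed parameters before they reach the command line."""
    ipaddress.ip_address(target)  # raises ValueError unless target is a literal IP
    if not PORT_SPEC.fullmatch(ports):
        raise ValueError(f"invalid port specification: {ports!r}")
    if any(int(p) > 65535 for p in re.split(r"[-,]", ports)):
        raise ValueError("port out of range")
    unknown = set(flags) - ALLOWED_NMAP_FLAGS
    if unknown:
        raise ValueError(f"flags not on the allowlist: {sorted(unknown)}")
    # Return an argv list (no shell string) so nothing is re-parsed by a shell.
    return ["nmap", *flags, "-p", ports, target]


print(route_tools("scan open port and detect service version"))
print(build_nmap_argv("192.0.2.10", "22,80-443", ["-sV"]))
```

Rejecting a malformed call with a precise error, instead of running it, gives the agent something concrete to correct on the next attempt.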

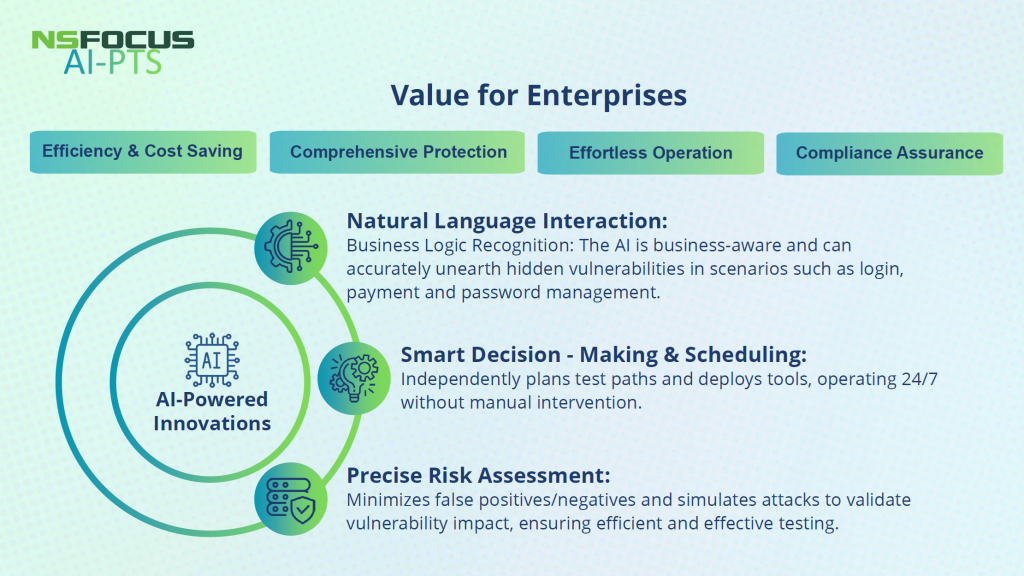

In recent years, NSFOCUS has continued to increase its investment in AI security research and development, launched the NSFGPT AI security capability platform, and built a “NSFGPT+DeepSeek” dual-model base and multi-agent ecosystem. As an important product of NSFOCUS’s “AI+Security” effort, NSFOCUS AI Automated Penetration Testing (AI-PTS) was officially released in August 2025. It equips autonomous AI agents with an attacker’s mindset and practical experience, redefining the automation boundary and practical effectiveness of penetration testing. AI-PTS can autonomously complete the entire process from target discovery and path planning to vulnerability exploitation, cutting in-depth assessments that used to take experts weeks down to hours, and it has become an industry-recognized benchmark product for AI penetration testing. This breakthrough not only highlights NSFOCUS’s leading position in intelligent attack and defense but also marks the entry of enterprise security defense into a new stage of “autonomy, high frequency, and actual combat”.

Summary

The sharp drop in cybersecurity stocks triggered by Anthropic’s release of Claude Code Security reveals the capital market’s sensitivity and anxiety in the face of AI-driven change, and it also reflects the market collectively rethinking the profound transformation of the security paradigm in the AI era. From the perspective of technological evolution, AI is fundamentally reshaping the offensive and defensive landscape of cybersecurity: AI-driven attack capabilities, which are doubling every six months, are gradually rendering traditional defenses ineffective. Only by using AI to counter AI can we maintain a basic level of security in the escalating confrontation. In other words, the security revolution of the AI era is no longer a question for the future but a reality that is being rebuilt at an accelerating pace.

The arrival of Claude Code Security may mark a more profound turning point: it represents not a technological breakthrough for a single product but a historic leap of the overall defense system toward intelligence and autonomy. We need to use AI capabilities to advance the high-quality implementation of proactive security, built on active discovery and continuous verification. Only by recognizing the nature of this paradigm shift can we truly grasp the essence and future of cybersecurity in the AI era.