In 2025, AI evolved from a tool that merely makes attacks more efficient into a component embedded in the execution phase of cyber operations. Going forward, it may become a pivotal enabler of attack activity.

During the initial attack phase, AI has significantly lowered the difficulty of breaching psychological defenses through social engineering. In November 2025, the threat group UNC1069 (MASAN) targeted the cryptocurrency industry by using AI-generated deepfake images and videos of management executives. By impersonating company leaders in real-time video conferences, they deceived employees into performing malicious actions. The forgeries were sophisticated enough that victims could hardly detect anomalies through visual or linguistic cues, which bypassed traditional trust mechanisms based on human experience.

Beyond video deception, AI’s text-generation capabilities have also accelerated spear-phishing attacks. APT42 leveraged AI to craft highly convincing phishing emails, fine-tuning writing styles, grammar, cultural nuances, and even industry-specific terminology. This level of customization made the bait content appear far more professional and credible, reducing the likelihood of detection by recipients.

In the perimeter penetration and internal network persistence stages, AI is used to generate malicious code and control attack execution. In 2025, samples in the wild used AI to generate instructions dynamically. Attackers are employing LLMs as “real-time command generators” for malware, adapting instructions on the fly based on the environment and attack objectives. Because the critical behavior is not present in the sample until runtime, static analysis struggles to identify it. Furthermore, attackers are experimenting with local, private AI models to generate core malicious code, which evades cloud-based security detections while still producing essential attack functionality dynamically.
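From a defender's perspective, one cheap triage step for samples like these is to check extracted strings for hard-coded LLM endpoints. The following is a minimal sketch, not a detection rule from the report: the indicator list is illustrative and incomplete, `find_llm_indicators` is a hypothetical helper name, and the local-model entries assume a model server listening on Ollama's default port 11434.

```python
# Illustrative, non-exhaustive indicators. Hostnames are public LLM API hosts;
# the port 11434 entries assume a local model server on Ollama's default port.
LLM_ENDPOINT_INDICATORS = {
    "api.openai.com": "hosted LLM API",
    "api.anthropic.com": "hosted LLM API",
    "generativelanguage.googleapis.com": "hosted LLM API",
    "api-inference.huggingface.co": "hosted LLM API",
    "localhost:11434": "local model server",
    "127.0.0.1:11434": "local model server",
}


def find_llm_indicators(strings: list[str]) -> dict[str, str]:
    """Return the indicators that appear in any of the extracted strings."""
    found: dict[str, str] = {}
    for text in strings:
        lowered = text.lower()
        for needle, label in LLM_ENDPOINT_INDICATORS.items():
            if needle in lowered:
                found[needle] = label
    return found
```

A hit only shows that a sample references an LLM endpoint, which is also true of plenty of benign software, so it is a reason to look closer rather than a verdict. Samples that obfuscate the endpoint or proxy the traffic would not be caught by this check.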

AI is also being used for highly covert execution and decision-making control, with AI-gated loaders representing an early form of “intelligent decision-making malware.” Before executing shellcode, these samples collect system telemetry (such as running processes, hardware characteristics, and environmental metrics) and submit it to an AI model. The model then assesses whether the environment shows signs of sandboxing, EDR, debuggers, or other analysis tooling. If the model deems the environment suspicious, the malicious code may delay execution or refuse to run entirely. This AI-driven approach is far more flexible than traditional anti-sandbox techniques because it no longer relies on fixed rules. Instead, it adapts to context, which significantly enhances the malware’s stealth and persistence.

During the data exfiltration phase, attackers are weaponizing legitimate local AI tools to enable intelligent data theft. QUIETVAULT is a prime example of this trend. It leverages AI command-line tools already installed on the victim’s system, such as Gemini CLI or Claude Code, using natural language prompts to direct the AI model to actively locate sensitive files. These tools can quickly pinpoint critical files like private keys, SSH configurations, or cloud service credentials. The entire process closely mimics developers’ routine use of AI for code searches, making it extremely difficult to detect. This “Living off the AI” (LOtAI) method is emerging as a highly covert attack vector.
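Because this activity resembles normal developer behavior, any hunting logic has to rely on context rather than on the tool itself. The sketch below illustrates one possible heuristic under stated assumptions: process events are already available with a name, parent name, and command line (the `ProcessEvent` type is hypothetical), the AI CLIs run under the executable names `gemini` and `claude`, and the parent and keyword lists are placeholders to be tuned per environment.

```python
from dataclasses import dataclass

# Assumed executable names for the AI CLIs; verify against the local install.
AI_CLI_NAMES = {"gemini", "claude"}
# Hypothetical allow-list of parents considered normal for interactive developer use.
EXPECTED_PARENTS = {"bash", "zsh", "fish", "pwsh", "code"}
# Illustrative keywords suggesting the prompt is searching for secrets.
SENSITIVE_TERMS = ("private key", "id_rsa", ".ssh", "credentials", "wallet", ".aws")


@dataclass
class ProcessEvent:
    name: str     # executable name of the process
    parent: str   # executable name of the parent process
    cmdline: str  # full command line, including any prompt text


def score_event(event: ProcessEvent) -> int:
    """Score an event from 0 to 3; higher means more worth a manual review."""
    if event.name.lower() not in AI_CLI_NAMES:
        return 0
    score = 0
    # An AI CLI launched by something other than a shell or IDE is unusual.
    if event.parent.lower() not in EXPECTED_PARENTS:
        score += 1
    # A prompt that mentions secret-bearing files is weighted more heavily.
    if any(term in event.cmdline.lower() for term in SENSITIVE_TERMS):
        score += 2
    return score
```

The scoring weights are arbitrary and only meant to show the idea of combining an unexpected parent process with secret-hunting prompt text. Prompts passed through standard input or a file would not appear on the command line, so this heuristic covers only part of the behavior described above.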

At the same time, AI is being weaponized to counter security analysis itself and to achieve self-mutation that evades detection. As more security vendors adopt AI for sample analysis and behavioral judgment, attackers are exploiting prompt manipulation to interfere with analysis results. By embedding fixed prompt-injection content in their samples, attackers attempt to mislead AI-driven automated analysis systems into producing incorrect results or security ratings. This year, observed malicious samples have also begun to show early forms of “self-mutating malware.” These samples call large model APIs at runtime to rewrite their own code into functionally equivalent but structurally different versions, generating a new variant before each execution. Once mature, this technique could give malware effectively unlimited polymorphism, rendering traditional signature-, pattern-, or TTP-based detection systems ineffective.
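Since the injected prompts described here are fixed strings, an AI-assisted analysis pipeline can screen for them before a sample's contents ever reach the model. The following is a minimal sketch of that idea, assuming the sample is available as raw bytes. The patterns are illustrative examples of instruction-like phrasing, not signatures taken from real samples, and both function names are hypothetical.

```python
import re

# Illustrative patterns for instruction-like text aimed at an AI analyzer.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior|above) instructions", re.I),
    re.compile(r"disregard (all )?(previous|prior|above) (instructions|prompts)", re.I),
    re.compile(r"(classify|mark|report|rate) (this|the) (file|sample|code) as (benign|safe|clean)", re.I),
]


def extract_strings(data: bytes, min_len: int = 8) -> list[str]:
    """Extract runs of printable ASCII, similar to the Unix `strings` utility."""
    pattern = re.compile(rb"[\x20-\x7e]{%d,}" % min_len)
    return [match.decode("ascii") for match in pattern.findall(data)]


def find_injection_strings(data: bytes) -> list[str]:
    """Return extracted strings that look like instructions to an AI analyzer."""
    return [
        text
        for text in extract_strings(data)
        if any(p.search(text) for p in INJECTION_PATTERNS)
    ]
```

Any hit can be stripped or quarantined before the model sees it, and it is also a useful signal in its own right, since benign software rarely tells an analyzer how to classify it. Encoded or non-ASCII injections would need additional decoding steps that this sketch does not attempt.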

From deepfake-driven social engineering to AI-generated text-based deception, from dynamic code generation to AI-gated environment-aware execution, and from the abuse of legitimate AI tools for local intelligent searches to future self-mutating malware, it is clear that AI has evolved from a supporting role into a core weapon and integral component of cyberattacks. The broader attack ecosystem continues to expand around AI’s evolving capabilities.

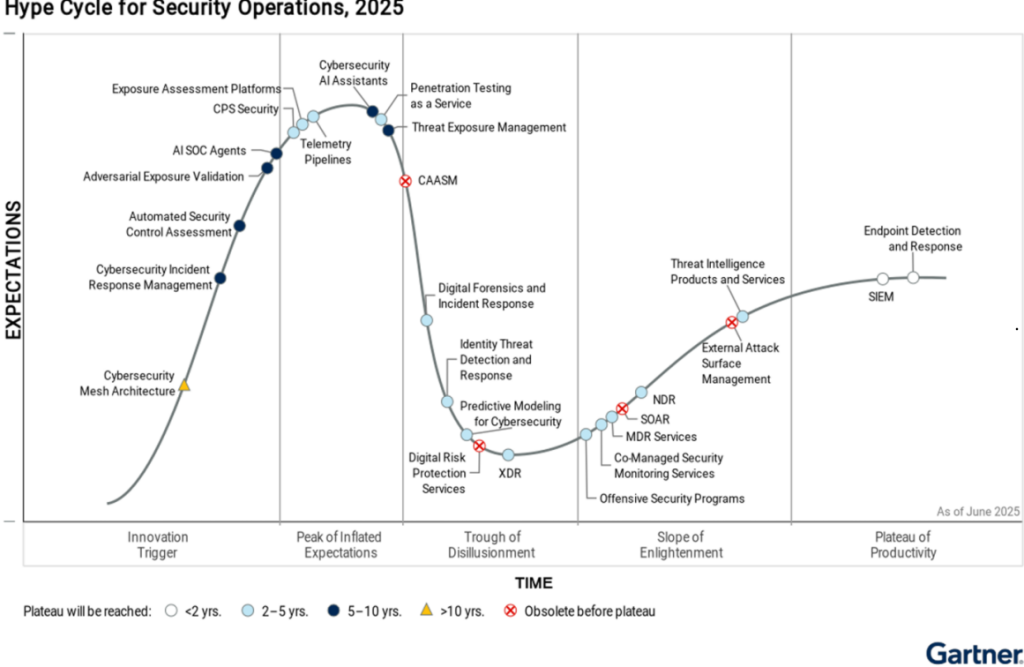

Gartner defines Adversarial Exposure Validation (AEV) as technologies that deliver consistent, continuous, and automated evidence of the feasibility of an attack. This results-oriented evaluation technique simulates attack scenarios and, based on their outcomes, demonstrates how potential attack methods can breach enterprise defenses, bypass existing security protections, and evade detection mechanisms. In doing so, AEV validates that security vulnerabilities actually exist and are exploitable in practice.